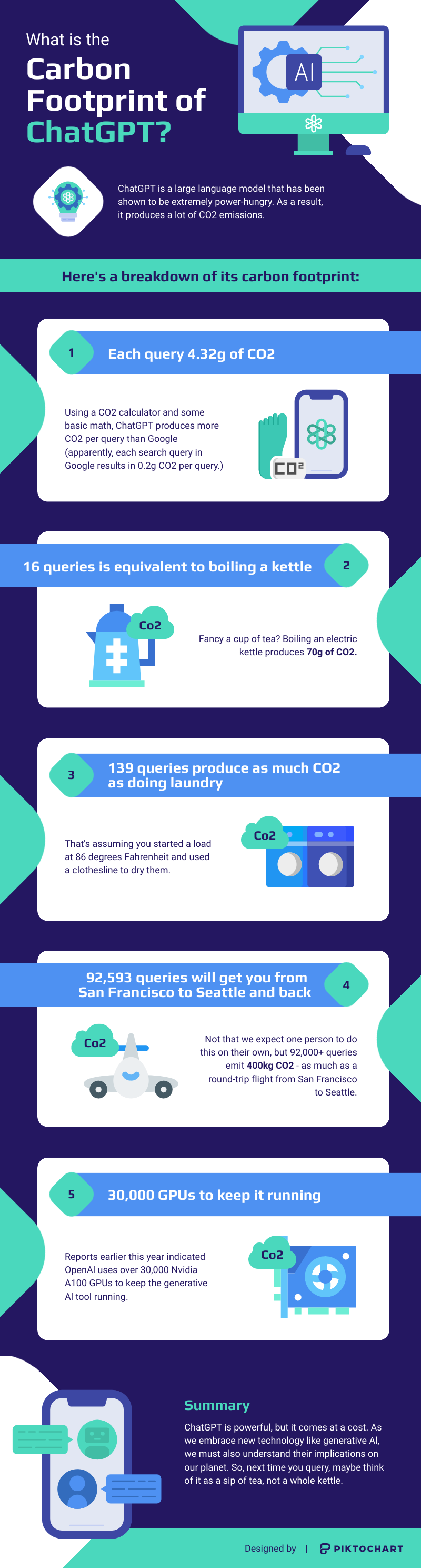

Every message you send to ChatGPT produces roughly 4.32 grams of CO2. One query barely registers. Scale it across 200 million weekly active users, and the carbon footprint of ChatGPT becomes a measurable force in global emissions.

A single ChatGPT request consumes four to five times more energy than a standard Google search. With an estimated one billion daily queries, the electricity draw alone could rival that of small nations by 2027.

Early estimates pegged ChatGPT’s annual output at 8.4 tons of CO2, double what an average person generates in a year. Those calculations assumed the system ran on 16 GPUs. In reality, OpenAI reportedly operates at least 30,000 enterprise-grade A100 units. The real carbon cost is almost certainly larger than any public figure suggests.

Water consumption adds another layer. Training the model required enough water to manufacture 370 BMWs. The data centers powering daily operations are projected to match the electricity usage of Sweden or the Netherlands within the next few years.

Below, we break down what those numbers mean per query, how training and operational costs compare, and what steps are being taken to shrink AI’s environmental impact.

AI’s Invisible Exhaust: The Cost of Using ChatGPT

Before we proceed, we need to make a disclaimer. Our statistic is an oversimplified calculation based on public data. If you want to see our homework, we’ll share it towards the end of the article.

For now, let’s dive deeper into what 4.32g of CO2 emissions per query means.

A single query in a conversation won’t shift the needle. But interacting with ChatGPT is usually a to-and-fro affair.

Here’s how different query counts compare with everyday activities:

- 15 queries = watching one hour of videos

- 16 queries = boiling one kettle

- 20-50 queries = consuming 500ml of water

- 139 queries = one load of laundry washed at 86 degrees Fahrenheit, then dried on a clothesline

- 92,593 queries = a round-trip flight from San Francisco to Seattle (according to this calculator)
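As a rough sketch, the CO2 comparisons above can be expressed as mass by multiplying each query count by the 4.32g per-query estimate. The function name and the list structure here are illustrative, and the water comparison is left out because it is not a CO2 figure.

```python
# The article's per-query estimate, in grams of CO2.
GRAMS_CO2_PER_QUERY = 4.32


def co2_grams(queries: int) -> float:
    """Estimated CO2 in grams for a given number of queries."""
    return queries * GRAMS_CO2_PER_QUERY


# Query counts taken from the comparison list above.
comparisons = [
    (15, "one hour of video"),
    (16, "boiling one kettle"),
    (139, "one load of laundry"),
    (92_593, "round-trip flight, San Francisco to Seattle"),
]

for queries, activity in comparisons:
    grams = co2_grams(queries)
    print(f"{queries:>6,} queries ~ {grams:>10,.1f} g CO2 ({activity})")
```

Because every row uses the same multiplier, any change to the per-query estimate shifts all of these equivalences proportionally.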

Now, a single person wouldn’t be able to get through those numbers in a day. But given that there were an average of 50 million unique visits per day to the site in July, the number of queries suddenly ramps up. If each unique visit resulted in 10 queries on average, that would be 500 million queries a day, or roughly 15 billion queries each month.

The numbers are dizzying, but you can see how easy it is to ramp up the carbon footprint of ChatGPT as more people use it.

How we calculated the information

Here’s how we arrived at our CO2 figure.

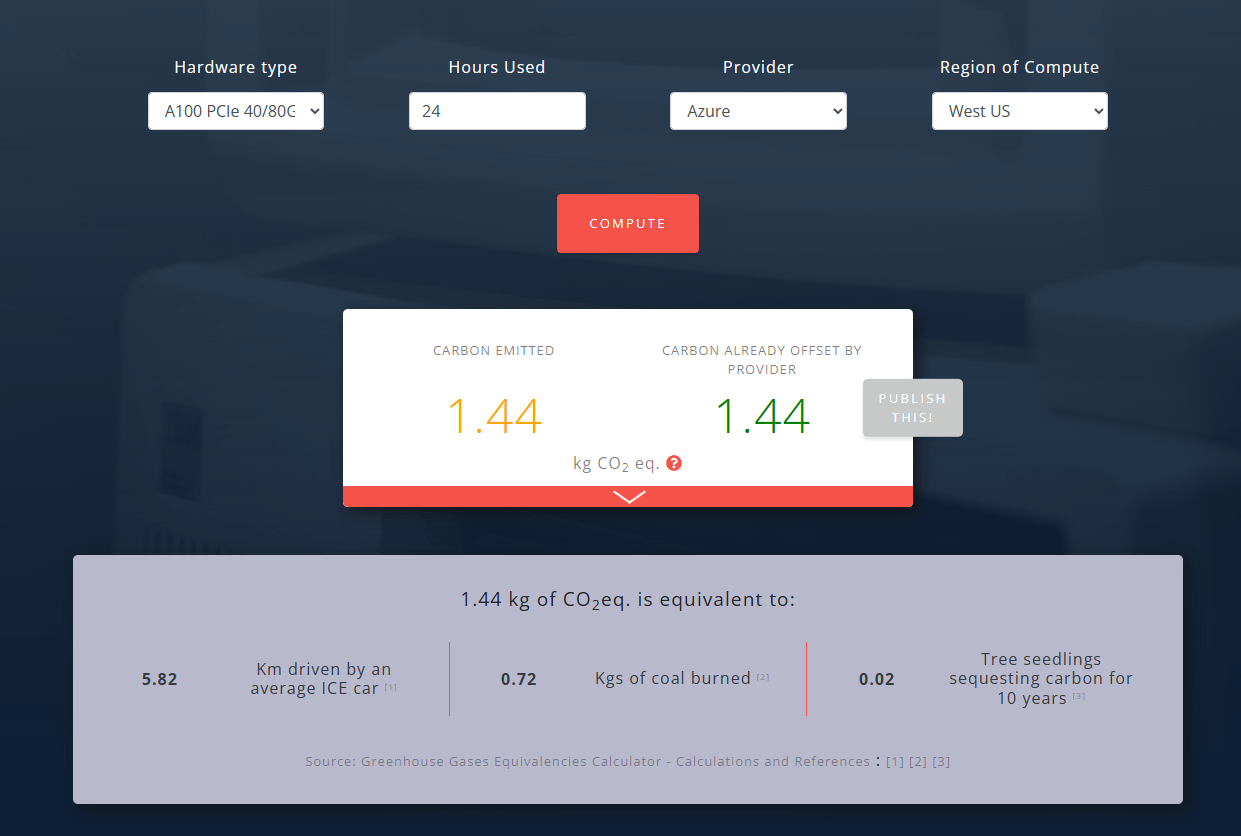

- OpenAI uses Microsoft Azure cloud infrastructure

- According to Nvidia, OpenAI runs ChatGPT on enterprise-grade A100 GPUs

- The tool calculated that each GPU, when run continuously for 24 hours, emits 1.44kg of CO2 per day

While the exact number isn’t public, reports estimate that ChatGPT runs on 30,000 GPUs. This is a conservative figure, and the true number of GPUs deployed to keep the generative AI tool running is likely higher.

- If we assume a minimum of 30,000 GPUs are in use, that means 43,200kg CO2 is being emitted daily.

- We know that ChatGPT received 10 million queries per day during launch week in November 2022. This is likely much higher at time of writing, but without official figures, we’ll use this as our baseline.

- 43,200kg CO2 / 10 million queries = 4.32g CO2 per query
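The steps above can be reproduced in a few lines. This is a minimal sketch using only the article's own inputs (1.44kg per GPU per day, 30,000 GPUs, 10 million queries per day); the function and parameter names are illustrative.

```python
def grams_co2_per_query(
    kg_co2_per_gpu_per_day: float,
    gpu_count: int,
    queries_per_day: int,
) -> float:
    """Spread the fleet's daily CO2 evenly across the day's queries."""
    daily_kg = kg_co2_per_gpu_per_day * gpu_count
    daily_grams = daily_kg * 1000  # 1kg = 1,000g
    return daily_grams / queries_per_day


# Inputs from the calculation above.
KG_CO2_PER_GPU_PER_DAY = 1.44
GPU_COUNT = 30_000
QUERIES_PER_DAY = 10_000_000  # launch-week baseline, November 2022

daily_kg = KG_CO2_PER_GPU_PER_DAY * GPU_COUNT
per_query = grams_co2_per_query(
    KG_CO2_PER_GPU_PER_DAY, GPU_COUNT, QUERIES_PER_DAY
)

print(f"Daily emissions: {daily_kg:,.0f} kg CO2")
print(f"Per query:       {per_query:.2f} g CO2")
```

Both the GPU count and the query volume are estimates, and they pull in opposite directions: more GPUs raises the per-query figure, while more daily queries on the same hardware lowers it.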

Not all doom and gloom

However, it’s not all cloudy skies on the horizon. The future could be brighter with talks of OpenAI developing their own energy-efficient chips, as well as continuous advancements in hardware and AI optimization.

OpenAI has also shown they’re doing their part to lower their carbon footprint by running on Microsoft’s Azure cloud, which is carbon neutral.

They were also intentional about using the A100 GPUs, which are 5x more energy efficient than CPU systems for generative AI applications.

How to Reduce AI’s Carbon Footprint

The environmental cost of AI is real, but it is not fixed. Researchers, companies, and individual users can each push the number downward.

Cleaner energy for data centers. The single largest lever is the power source behind the servers. Microsoft has committed to running Azure on 100% renewable energy by 2025. Google has matched its global operations with renewable energy purchases since 2017. When a data center runs on wind or solar instead of coal, the CO2 per query drops by as much as 80%. Location matters: training a model in France (nuclear-heavy grid) produced only 25 tons of CO2, while the same model trained on a US grid emitted over 500 tons.

More efficient hardware. GPU architecture continues to improve. Nvidia’s H100 chips deliver roughly three times the performance per watt of the A100 chips currently powering ChatGPT. Google’s TPU v4 processors are purpose-built for AI workloads and use less energy per operation than general-purpose hardware. Upgrading infrastructure directly reduces the energy consumed per query.

Smaller, smarter models. Not every task requires a model with hundreds of billions of parameters. Techniques like model distillation, pruning, and quantization produce lighter versions capable of handling routine prompts at a fraction of the energy cost. OpenAI’s GPT-4o mini, for example, uses roughly 60% less energy per request than the full GPT-4 model.

User-level choices. Shorter prompts, fewer unnecessary follow-ups, and selecting smaller model tiers when precision is not critical can reduce per-session emissions. Turning off plugins and browsing features when they are not needed saves additional compute cycles.

The path forward requires progress on every front. No single fix eliminates the problem, but each improvement compounds across billions of daily interactions.